For folks who went to Inspire 2023, there was a session about SCORM, xAPI, and content practices in general.

It was pretty good - we were walked through best practices for generating content and how to work with it.

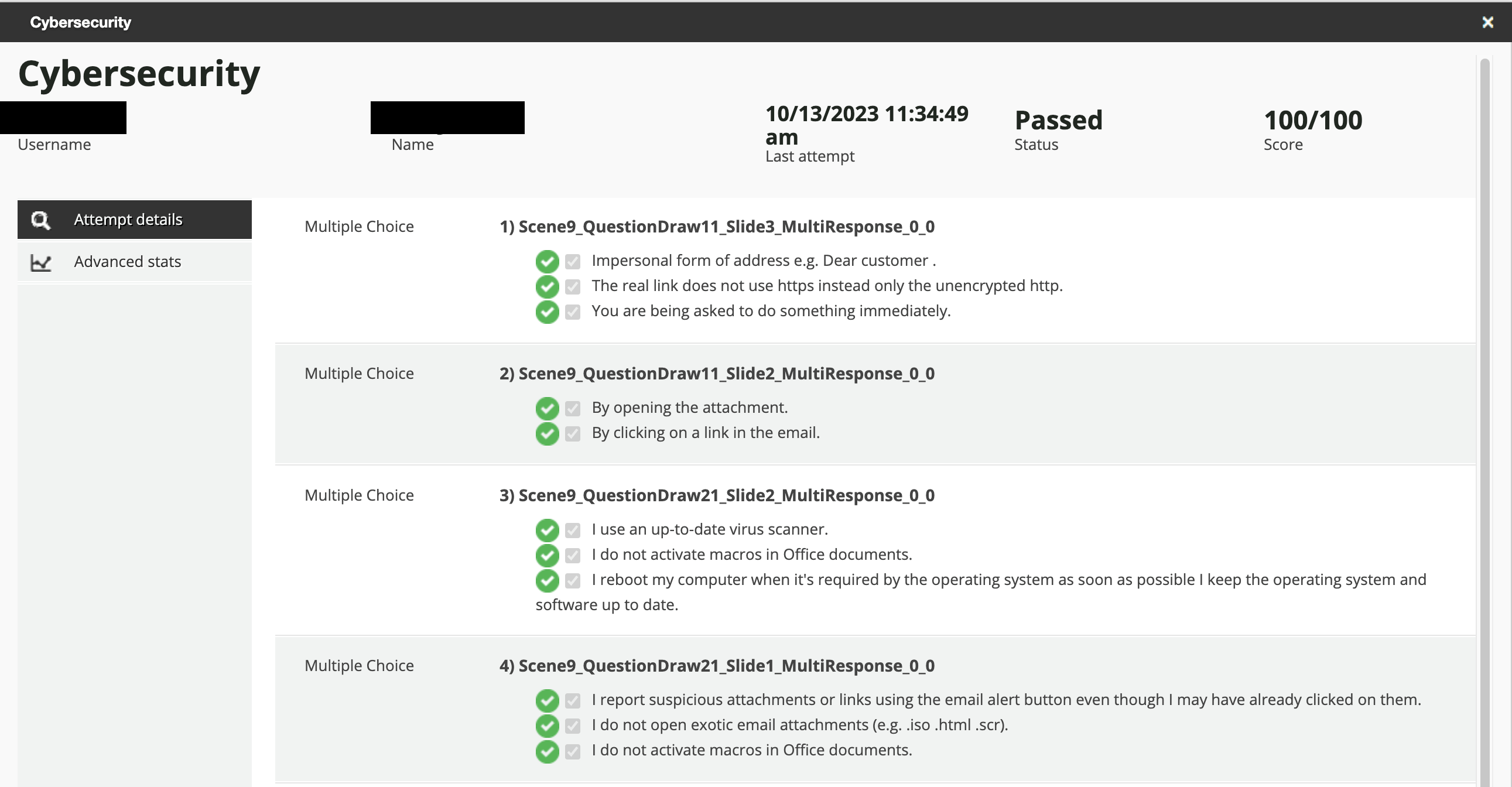

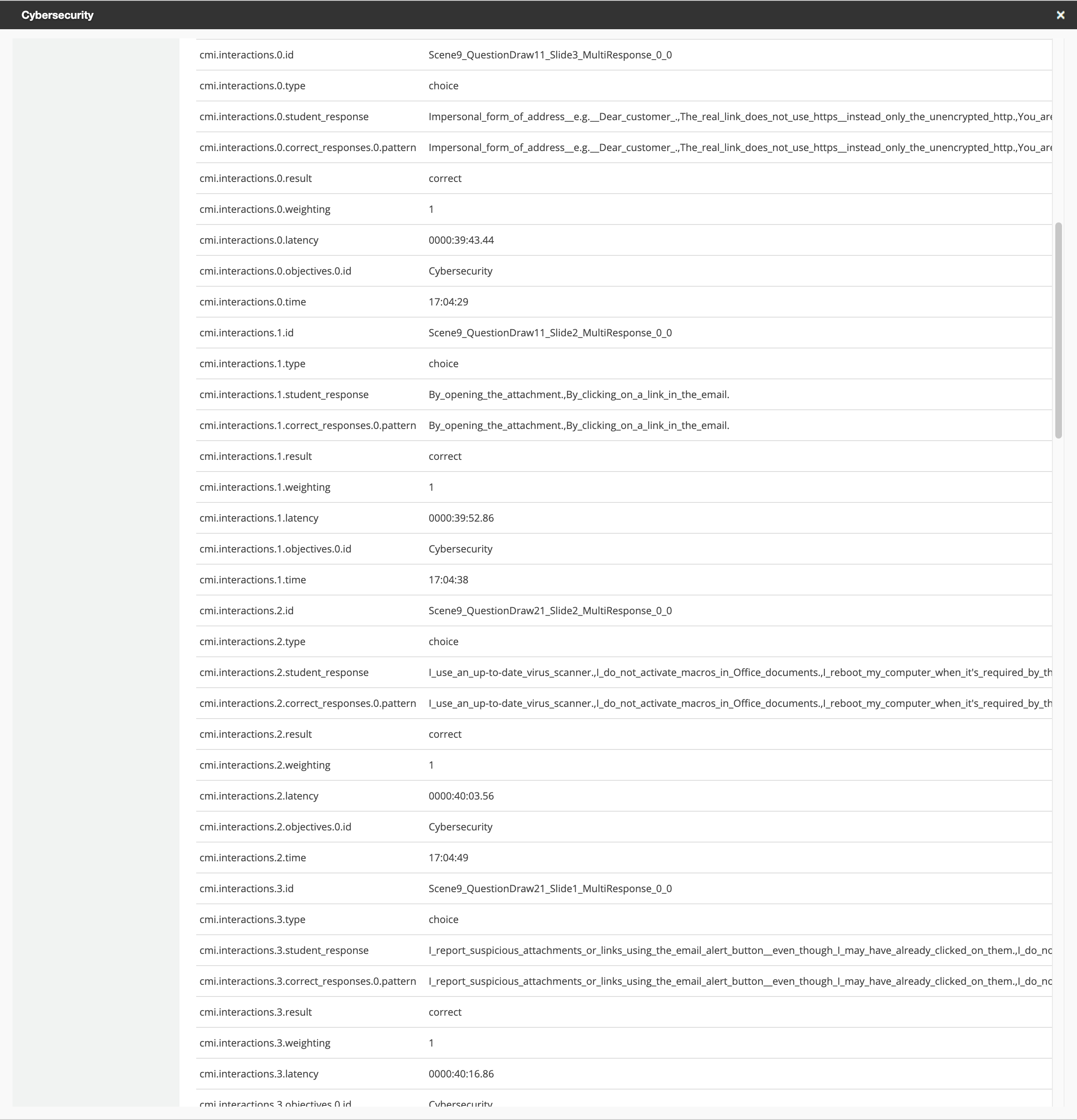

At one point I raised my hand and asked how you get those fancy question-level CMI interactions into the system in a human-readable format for consumption (essentially a question/answer-level report for a training material).

And I am here to say that

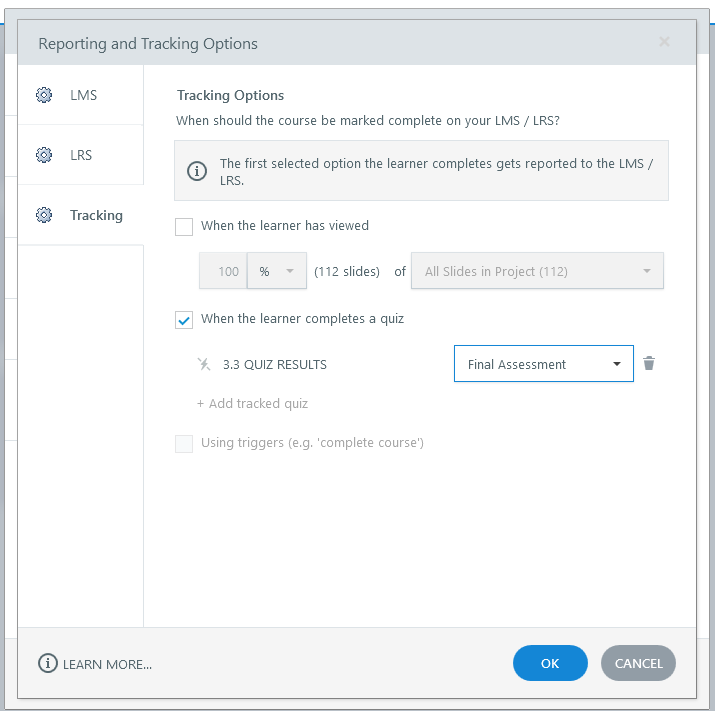

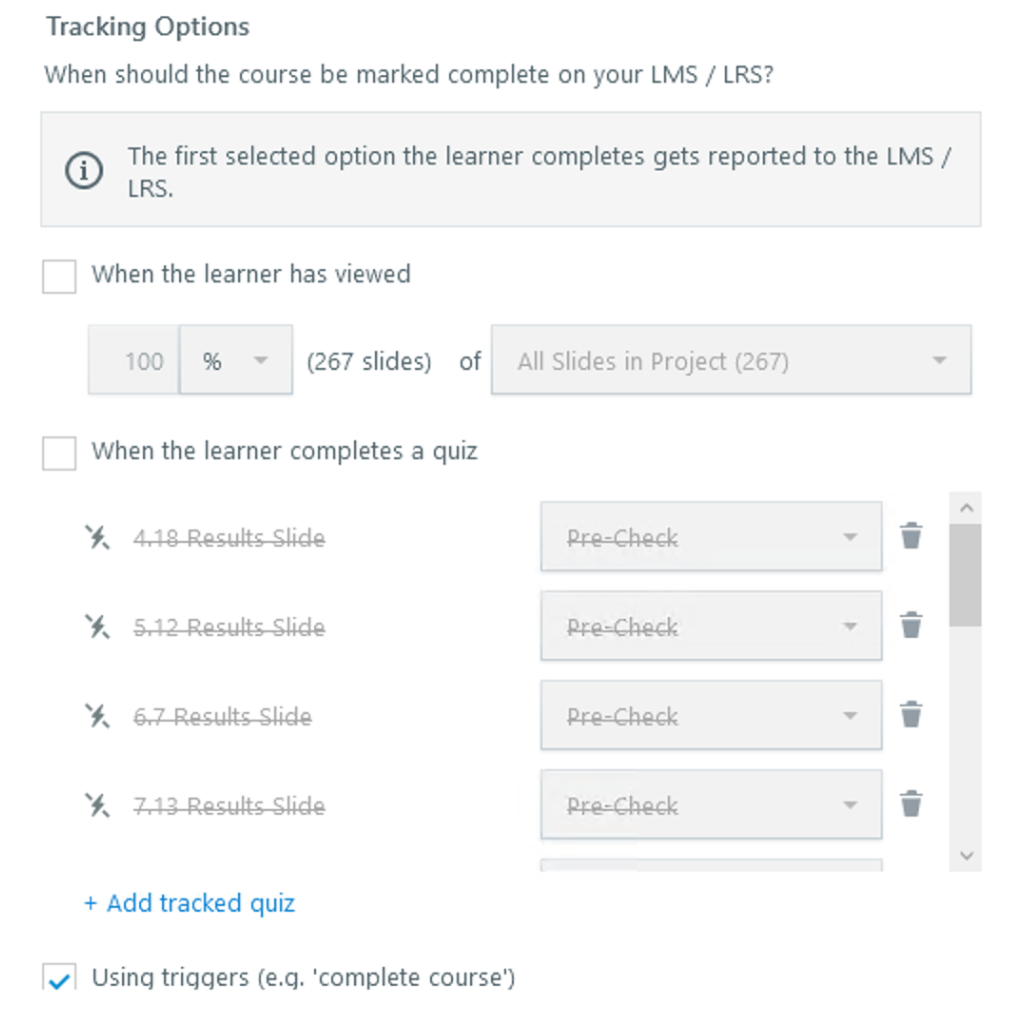

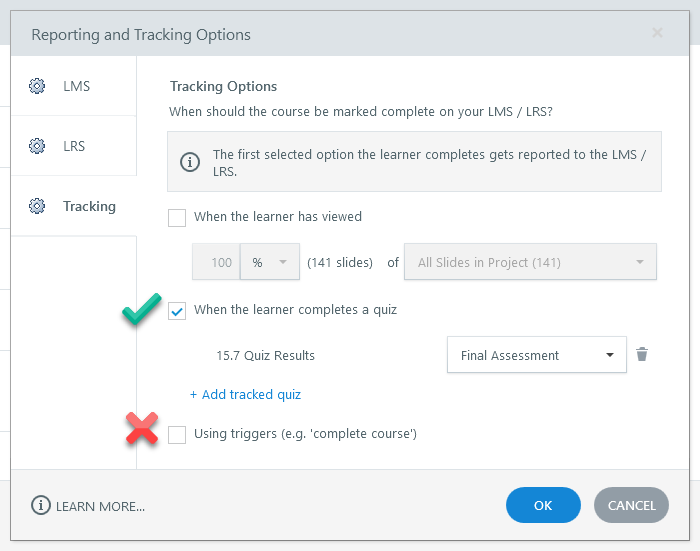

First and foremost, where was I going wrong? Do not set a trigger to complete your course. You want the completion criteria to be a person passing a quiz.

Now in practice I began doing that maneuver years ago, but I wish someone had walked me through it, or that I had looked into it a little more, before adopting the approach. The short version is that Articulate and Rise both had to live through a transition out of Flash and fully into HTML5. There was a time (and I am sorry I can only speak to it anecdotally, as I was troubleshooting less and leading more) when it was a bit rocky to count on those CMI interactions, let alone to get completions successfully recorded in your LMS.

To help? I believe authoring systems like Articulate Storyline and others took a step back and said “hey, if they want a single step to trigger a completion, let's give it to them”.

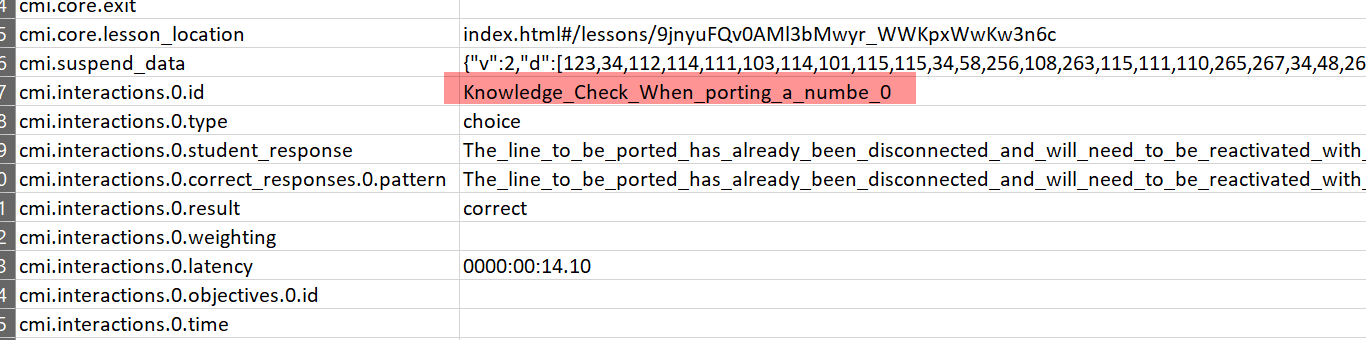

Here is the thing - using that trigger transmits no deeper CMI interactions to the system. In essence, you have short-circuited all of the data your course could be collecting and told the LMS the person is done with that click... and that is about it. In fact, the CMI interaction “payload” that is submitted looks like SCORM 1.2 gibberish.
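To make the difference concrete, here is a rough TypeScript sketch of the two reporting styles. It is not what Storyline or any other authoring tool actually emits: `RecordingApi`, `reportViaTrigger`, and `reportViaQuiz` are hypothetical names made up for illustration, and only the `cmi.*` element names come from the SCORM 1.2 data model.

```typescript
// Hypothetical stand-in for the SCORM 1.2 API object an LMS exposes.
// It only records calls so the two reporting styles can be compared.
class RecordingApi {
  readonly calls: Array<[string, string]> = [];

  LMSSetValue(element: string, value: string): string {
    this.calls.push([element, value]);
    return "true"; // SCORM 1.2 calls return the strings "true" / "false"
  }
}

// Assumption: a single "complete course" trigger boils down to one status write.
function reportViaTrigger(api: RecordingApi): void {
  api.LMSSetValue("cmi.core.lesson_status", "completed");
}

interface Answer {
  id: string;
  type: string; // e.g. "choice", "true-false"
  response: string;
  correct: boolean;
}

// Assumption: quiz-driven reporting writes one interaction record per question,
// then a score and a pass/fail status. Expects at least one answer.
function reportViaQuiz(api: RecordingApi, answers: Answer[], passMark: number): void {
  answers.forEach((a, n) => {
    api.LMSSetValue(`cmi.interactions.${n}.id`, a.id);
    api.LMSSetValue(`cmi.interactions.${n}.type`, a.type);
    api.LMSSetValue(`cmi.interactions.${n}.student_response`, a.response);
    api.LMSSetValue(`cmi.interactions.${n}.result`, a.correct ? "correct" : "wrong");
  });
  const right = answers.filter((a) => a.correct).length;
  const score = Math.round((right / answers.length) * 100);
  api.LMSSetValue("cmi.core.score.raw", String(score));
  api.LMSSetValue("cmi.core.lesson_status", score >= passMark ? "passed" : "failed");
}

const triggerApi = new RecordingApi();
reportViaTrigger(triggerApi);

const quizApi = new RecordingApi();
reportViaQuiz(
  quizApi,
  [
    { id: "Q1", type: "choice", response: "b", correct: true },
    { id: "Q2", type: "true-false", response: "t", correct: false },
  ],
  50,
);

// One write for the trigger versus one record per question plus score and status.
console.log(triggerApi.calls.length, quizApi.calls.length);
```

The trigger path leaves the LMS with a status and nothing else, which is why there is no question-level detail to report on afterwards.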

So - hopefully someone will read this and gain from it. If you want those interactions, the question-and-answer-level data, then the moral of the story is: single trigger = bad, learner completing a quiz = good.
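As a rough idea of how flat SCORM 1.2 interaction elements could become a readable question/answer report, here is a small TypeScript sketch. How Docebo or any other LMS does this internally is not something I can speak to; `toReport` and the sample data are hypothetical, and only the `cmi.interactions.n.*` element names are from the SCORM 1.2 data model.

```typescript
// Group flat "cmi.interactions.<n>.<field>" pairs into one row per question.
function toReport(pairs: Array<[string, string]>): Array<Record<string, string>> {
  const rows = new Map<number, Record<string, string>>();
  const pattern = /^cmi\.interactions\.(\d+)\.(id|type|student_response|result)$/;

  for (const [element, value] of pairs) {
    const match = pattern.exec(element);
    if (!match) continue; // skip score, status, and anything unrecognised
    const index = Number(match[1]);
    const row = rows.get(index) ?? {};
    row[match[2]] = value;
    rows.set(index, row);
  }

  // Return rows in question order, regardless of the order they arrived in.
  return Array.from(rows.entries())
    .sort((a, b) => a[0] - b[0])
    .map(([, row]) => row);
}

// Made-up sample data in the shape a quiz-driven SCO might submit.
const report = toReport([
  ["cmi.interactions.1.id", "Q2"],
  ["cmi.interactions.1.student_response", "t"],
  ["cmi.interactions.1.result", "wrong"],
  ["cmi.interactions.0.id", "Q1"],
  ["cmi.interactions.0.student_response", "b"],
  ["cmi.interactions.0.result", "correct"],
  ["cmi.core.lesson_status", "passed"],
]);

for (const row of report) {
  console.log(`${row.id}: answered "${row.student_response}" (${row.result})`);
}
```

A course that only fired a completion trigger would give this function nothing to group, since no `cmi.interactions` elements were ever sent.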

Are there caveats to the approach? Well - there are a few:

- SCORM can be flaky in how it suspends and resumes a score when a quiz is suspended midway through.

- Transmitting more data makes the SCORM traffic more verbose. You can use that to your advantage (especially in Captivate, where a publishing option is to send interaction communications or something like that). The flaw is that SCORM counts on constant communication between the SCO and the LMS - otherwise the course can be left in a “bad state”.

- Depending on the user's connectivity (and working from home or remotely can severely challenge this), the user could be attempting the course somewhere it doesn't stand a chance of solid LMS-to-SCO communication.

- For long-duration SCOs, you can find the LMS session times out before the course gets around to sending the CMI interactions for its quiz.

- Editing a SCO that is “in flight” (live in production) can be hazardous to the health of the SCO and your learning campaign. Never mess with the structure of a published SCO without asking yourself, “why is it getting hot in here?” Structural changes are those that negatively affect the XML manifest, including deleting expected slides, changing question and answer order or answer counts, etc. In Docebo, you can hide the older SCO and import a newer training material... but that approach has some caveats too (another article, another story).
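The connectivity and session caveats above come down to whether each write actually reaches the LMS. Below is a minimal TypeScript sketch of committing after every question and checking the result, assuming the standard SCORM 1.2 calls `LMSSetValue`, `LMSCommit`, and `LMSGetLastError`. The `setAndCommit` helper and the `fakeLms` object are hypothetical, with the fake standing in for an LMS whose session expires partway through; authoring tools decide their own commit timing, so treat this as an illustration rather than a recipe.

```typescript
// The subset of the SCORM 1.2 API used here; every call returns a string.
interface Scorm12Api {
  LMSSetValue(element: string, value: string): string;
  LMSCommit(arg: ""): string;
  LMSGetLastError(): string;
}

// Write one value and commit right away, so a dropped connection or an
// expired LMS session is noticed at this question rather than at the end.
function setAndCommit(api: Scorm12Api, element: string, value: string): boolean {
  if (api.LMSSetValue(element, value) !== "true") return false;
  return api.LMSCommit("") === "true";
}

// Hypothetical fake LMS that rejects commits after `limit` of them,
// standing in for a session timeout.
function fakeLms(limit: number): Scorm12Api {
  let commits = 0;
  return {
    LMSSetValue: () => "true",
    LMSCommit: () => (++commits <= limit ? "true" : "false"),
    // "101" is the SCORM 1.2 general exception code; "0" means no error.
    LMSGetLastError: () => (commits > limit ? "101" : "0"),
  };
}

const lms = fakeLms(1);
const firstSaved = setAndCommit(lms, "cmi.interactions.0.result", "correct");
const secondSaved = setAndCommit(lms, "cmi.interactions.1.result", "wrong");

if (!secondSaved) {
  // A real course might warn the learner here instead of carrying on silently.
  console.log(`Commit failed, LMS error code ${lms.LMSGetLastError()}`);
}
```

Committing per question costs more round trips, which is the verbosity trade-off mentioned in the list, but it narrows the window in which answers can be lost.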

So good luck with this - I hope someone gains from the stub and the conversation with another SCO enthusiast in the community.