We're opening a private beta for Custom Rubrics in AI Virtual Coaching (AIVC), and we're inviting customers to take part.

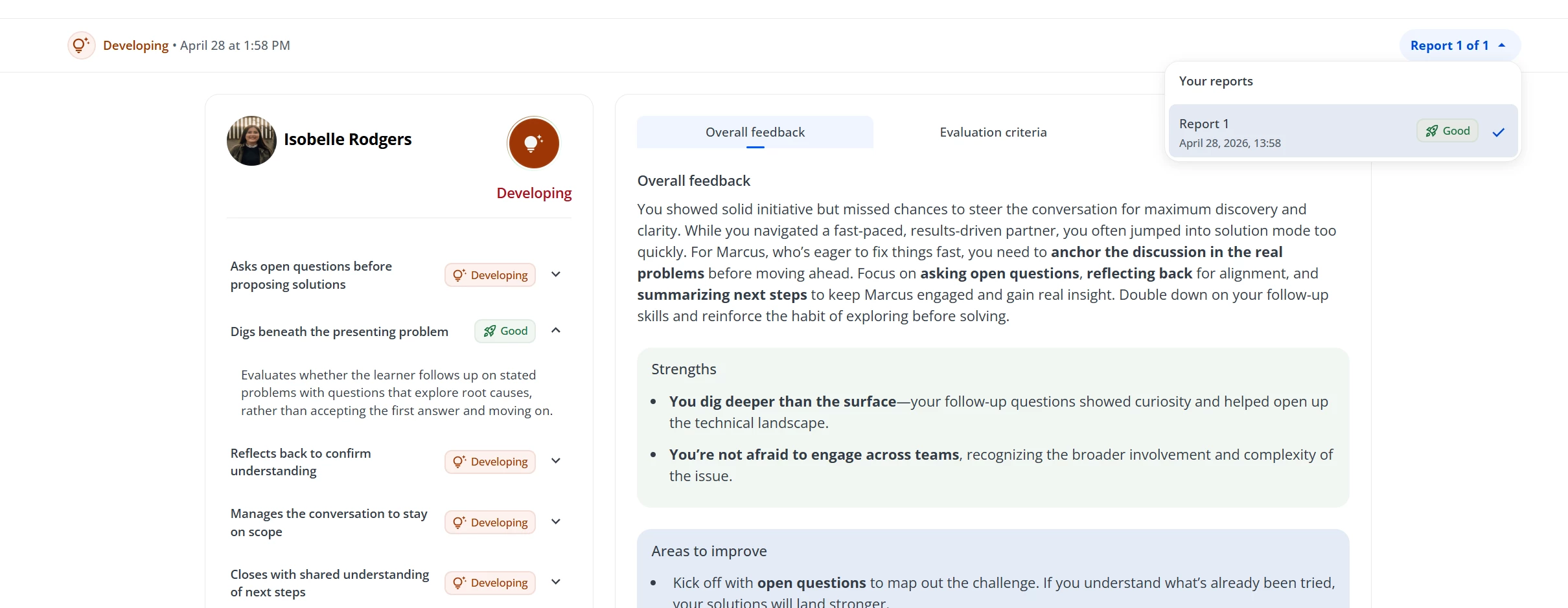

Right now, AIVC evaluates every simulation against the same default criteria. Custom Rubrics changes that. You define what 'good' looks like for your specific scenario, the AI evaluates each simulation against that definition, and learners see feedback tied to the criteria that matter for your organization.

- Phase 1 (April 14): Stop evaluating against generic defaults. Build a rubric with up to 5 criteria that reflect your actual training standards, each with observable examples of strong and developing performance. A default rubric ships on every new scenario so you're never starting from scratch.

- Phase 2 (May 11 / April 28): Human review enters the loop. Assign reviewers to any scenario and let learners submit their best attempt for human evaluation. Reviewers receive the full AI report as a starting point, then add their own judgment on top.

What we're asking of you

- Create at least one new scenario and configure a custom rubric

- Run at least 10 simulations and review the learner reports

- Complete two short feedback surveys

- Share what surprised you, where the AI nailed it, and where it missed

Time commitment: roughly 6–8 hours across 4 weeks.

What you get

- 2,200 complimentary AI credits (enough for 20+ full simulations) for the first 20 participants

- Direct access to the product team throughout the beta

- A direct hand in shaping the GA release through your feedback

The beta starts April 14. To apply, complete this survey.

Questions? Drop them below.